MultiDSLA mimics user behaviour

We have designed our test systems to interact with the phone network as the user does. The test design flexibility of MultiDSLA enables it to mimic some of the more complex interactions performed by users and the network – interactive voice response (IVR) is a good example.

IVR is essential in numerous applications – call centres, auto-attendants, voicemail and telephone banking, for example. IVR tests can be run at specified intervals; in a production environment there may be concern about the resilience of the IVR platform at times of high call volume. Sequencing tests throughout the day to include both quiet and busy times shows how the IVR behaves under both light and heavy load.

A word on prompts

The first thing you need when testing IVR response is a full set of the prompts in wave file format (*.wav) if these are available. Don’t worry if they are not, as you can make recordings from the network under test. The MultiDSLA system can be used to make these recordings – see ‘Recording prompts’ below.

What IVR behaviours do you want to test?

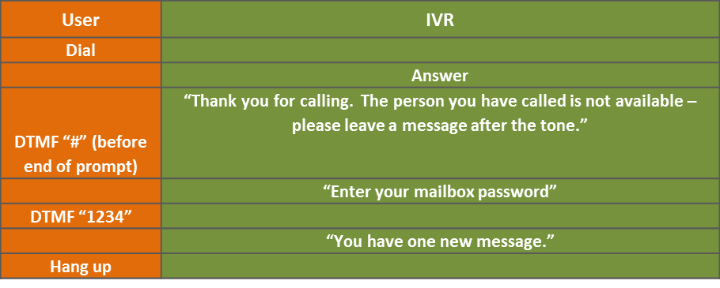

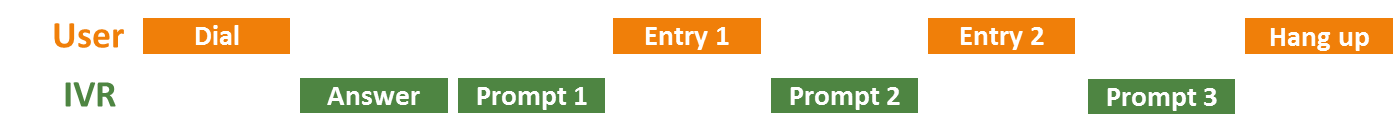

Figure 1: Typical User/IVR Interaction

Building blocks

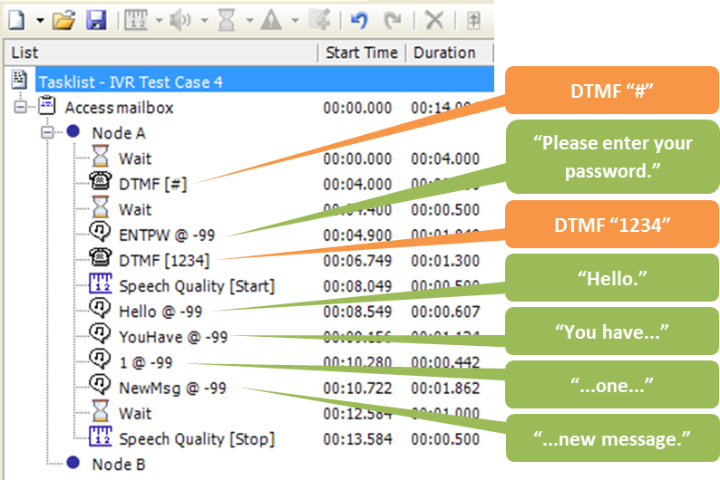

The test sequence in Figure 3 is typical of a building block for IVR testing.

PESQ or POLQA?

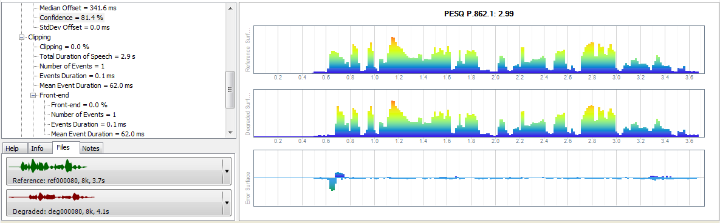

You can use either PESQ or POLQA to analyse IVR behaviour, but PESQ has two characteristics which make it more suitable for this application. First, PESQ is more sensitive to ‘clipping’, where the beginning or end of a speech utterance is missing. Second, PESQ provides a ‘percentage confidence’ result (essentially how well the time characteristics of the expected and actual prompts match), which is a reliable indicator of whether the IVR system has played the expected prompt. The speech quality score, whether PESQ or POLQA, gives a good indication of the match between the expected and the actual prompts, unless you are using recordings of prompts made from the network under test. In that case, neither PESQ nor POLQA will return a reliable score, and the PESQ Confidence indicator becomes invaluable.
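The decision described above can be sketched in a few lines of Python. This is only an illustration of the reasoning, not part of MultiDSLA: the function name, its parameters and both default thresholds are assumptions, and real targets should come from your own sample measurements.

```python
from typing import Optional


def prompt_played(confidence_pct: float,
                  quality_score: Optional[float],
                  reference_from_network: bool,
                  min_confidence_pct: float = 80.0,   # assumed target, tune per system
                  min_quality_score: float = 3.0) -> bool:  # assumed target
    """Decide whether the expected prompt was heard (hypothetical helper)."""
    # Confidence is checked in every case.
    if confidence_pct < min_confidence_pct:
        return False
    # A reference recorded from the network under test makes the quality
    # score unreliable, so only Confidence is used in that case.
    if reference_from_network or quality_score is None:
        return True
    return quality_score >= min_quality_score
```

With a reference recorded from the network, `prompt_played(85.0, 1.2, True)` accepts the prompt on Confidence alone, whereas the same figures with an original wave file as reference would be rejected on the quality score.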

Example

Figure 4 shows how MultiDSLA detects that the beginning of a prompt is missing. The prompt says something like “Thank you for calling the speech-enabled auto-attendant”, but the initial “th” sound is missing. PESQ reports a Confidence of over 80%*, suggesting that the correct prompt has been heard, but also shows that a 62 ms portion is missing at the front end.

* A few sample measurements will usually be sufficient to determine the target percentage.

So how do I detect problems without working through thousands of graphs?

Simple – set an Alert to notify you when exceptions occur. For example, you might choose to receive an Alert on Speech Level, on Confidence (see the Example above) or on Speech Quality Score. Drill down to the graphs to confirm the exceptions and work out what is happening. The audio replay feature is particularly useful here, because you can listen to exactly what the IVR played.
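The idea of alerting on exceptions, rather than reading every graph, can be illustrated with a short Python sketch. It does not use any MultiDSLA interface: the `Measurement` and `AlertLimits` types, their field names, the dB unit for speech level and all limit values are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Measurement:            # hypothetical record of one test result
    test_id: str
    speech_level_db: float
    confidence_pct: float
    quality_score: float


@dataclass
class AlertLimits:            # assumed limits, set from your own baseline
    min_speech_level_db: float = -30.0
    min_confidence_pct: float = 80.0
    min_quality_score: float = 3.0


def find_exceptions(results: List[Measurement],
                    limits: AlertLimits) -> List[Tuple[str, List[str]]]:
    """Return (test_id, reasons) for every result that breaches a limit."""
    alerts = []
    for m in results:
        reasons = []
        if m.speech_level_db < limits.min_speech_level_db:
            reasons.append("speech level")
        if m.confidence_pct < limits.min_confidence_pct:
            reasons.append("confidence")
        if m.quality_score < limits.min_quality_score:
            reasons.append("quality score")
        if reasons:
            alerts.append((m.test_id, reasons))
    return alerts
```

Only the results returned by `find_exceptions` then need to be opened as graphs or replayed.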

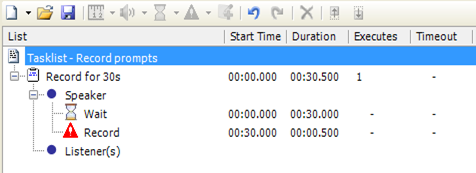

Recording prompts

If you want the test system to trigger the prompts, program the necessary steps (DTMF sequences and/or speech files and waits) in place of the Wait event. Once you have a recording, use a third-party wave file editor such as Adobe Audition or Audacity to save the prompts to separate files.
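A wave file editor is the route recommended here. If you would rather script the split, the following is a minimal sketch using Python's standard `wave` module. It assumes an uncompressed PCM recording and that you already know the start and end time of each prompt (for instance by noting them in the editor); the function name, file names and times are illustrative only.

```python
import wave


def split_wav(source_path, segments):
    """Copy each (output_path, start_s, end_s) segment into its own file.

    Times are in seconds from the start of the recording.
    """
    with wave.open(source_path, "rb") as src:
        params = src.getparams()
        rate = src.getframerate()
        total = src.getnframes()
        for out_path, start_s, end_s in segments:
            # Convert times to frame positions, clamped to the recording.
            start = max(0, int(start_s * rate))
            end = min(total, int(end_s * rate))
            if end <= start:
                raise ValueError(f"empty segment for {out_path}")
            src.setpos(start)
            frames = src.readframes(end - start)
            with wave.open(out_path, "wb") as dst:
                # Same channels, sample width and rate as the source;
                # the frame count in the header is corrected on close.
                dst.setparams(params)
                dst.writeframes(frames)


# Example call (hypothetical file names and times):
# split_wav("ivr_recording.wav", [("welcome.wav", 0.0, 3.2),
#                                 ("main_menu.wav", 3.8, 9.5)])
```

Keeping the source format unchanged means the separated prompts have the same sample rate and bit depth as the recording they came from.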

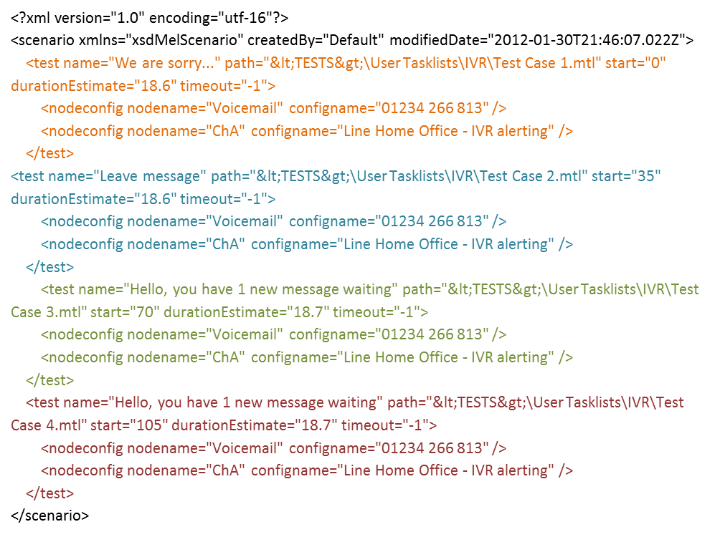

Using Scenarios to build a complete test

You may need to build a number of tasklists to test the different stages of the IVR transactions. Running these individually is tedious, but they can be combined in a Scenario such that they function in sequence. The example Scenario below shows how four tests have been combined to create a test suite which performs these steps:

There is no limit to the number of steps which can be included.
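Conceptually, a Scenario is an ordered list of tasklists run one after another. The sketch below illustrates that idea in plain Python; it is not how MultiDSLA implements Scenarios. The step names are placeholders, each tasklist is stood in for by a function returning pass or fail, and stopping at the first failure is an assumption made here because later IVR stages usually depend on earlier ones.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[], bool]]  # (name, function returning pass/fail)


def run_scenario(steps: List[Step],
                 stop_on_failure: bool = True) -> List[Tuple[str, bool]]:
    """Run the steps in order and return (name, passed) for each step run."""
    results = []
    for name, run in steps:
        passed = run()
        results.append((name, passed))
        if not passed and stop_on_failure:
            break  # later stages would start from the wrong IVR state
    return results


# Placeholder steps standing in for four tasklists.
steps = [
    ("tasklist_1", lambda: True),
    ("tasklist_2", lambda: True),
    ("tasklist_3", lambda: False),
    ("tasklist_4", lambda: True),
]
print(run_scenario(steps))
```

Because the steps are held in a list, adding further stages only means appending to it.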

Contact Opale Systems or your distributor for more information.