Robotic Testing Revolution: Enhancing Device Quality Assurance for a North American Mobile Operator

One of our North American Mobile Operator customers had been using MultiDSLA systems for several years when, one day, they presented us with a challenge. We like challenges, of course, so we were all ears. But when we saw the working environment, we were all eyes too!

Inside a room easily large enough for 50 people to work in, there were just a few engineers. And yet some 20 mobile devices were being put through their paces in a methodical and thorough manner. Were the engineers testing five devices each? Well, not exactly: the real work was being done by a squad of automated robot arms, each tapping away at the screen and buttons of a handset or tablet, and each housed in its own enclosure. We learned that the robots work these unsuspecting devices hard, seeking out reliability problems, software bugs and any other issues the Operator needs to know about before launching a new device or service.

The Challenge

Next came the challenge:

“We want to add voice quality assessment to the large range of tests we already do.”

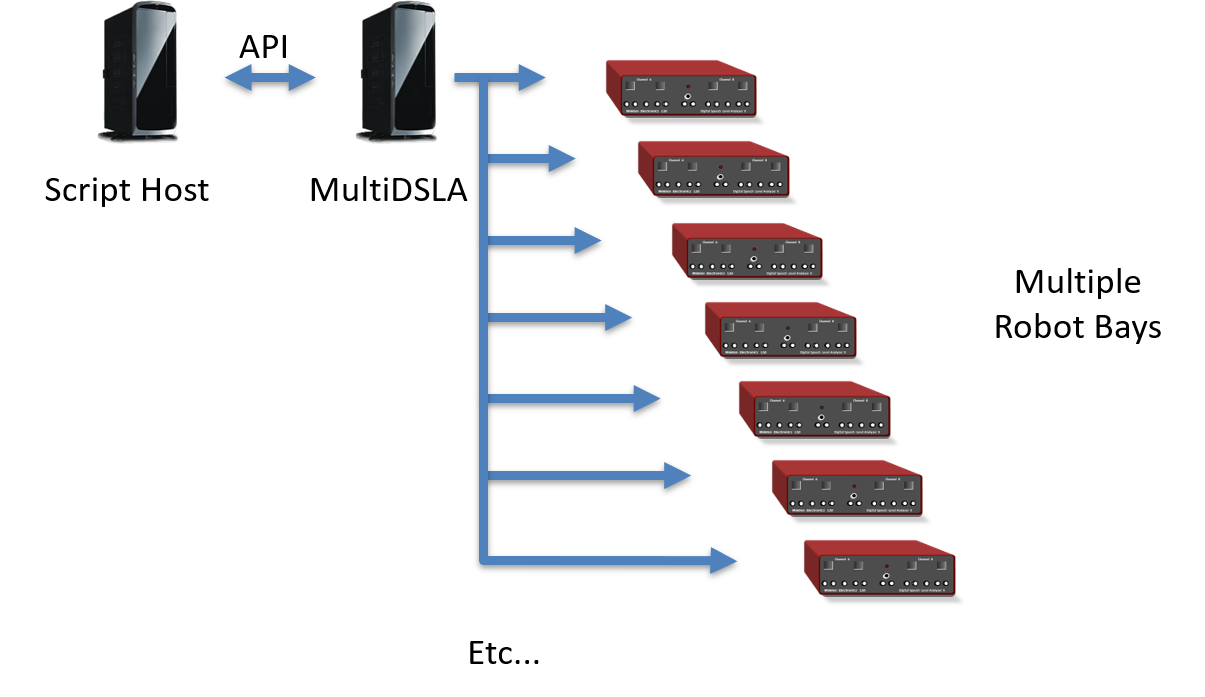

“Okay,” we said, “we can do that.” As the discussions continued, it became clear that the real goal would be to allow each of the 20 or so robot stations to run through its tests asynchronously, since each could be working on a different device, while allowing overall control from a central PC. Oh yes, and without having 20 separate test systems: just one busy, multi-tasking system! Guess what? We still said, “Yes, MultiDSLA can do that!”

Proof of Concept: MultiDSLA’s Application Programming Interface (API)

The next step was for us to demonstrate how MultiDSLA’s Application Programming Interface (API) could be used to perform the essential steps required to meet the customer’s objectives:

- Log on from the host scripting platform

- Determine test system availability/status for each robot bay

- Select which robot bay to use

- Select a pre-defined test to run, or design a test on the fly

- Execute a test with the defined number of execution cycles

- Determine the test status

- Extract the test results (POLQA scores and other data)

- Extract analytical results in the event of exceptions
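To make the workflow above more concrete, here is a minimal sketch of how a central PC might drive many robot bays from one shared test system. It is illustrative only and assumes nothing about the real MultiDSLA API: `SimulatedVoiceTestServer`, `log_on`, `is_available`, `run_test`, `run_all_bays` and `BayResult` are hypothetical names, and the scores are random placeholders, not POLQA results. The sketch uses Python's `asyncio` so that each bay's test progresses independently, and it reports a failure against its bay instead of stopping the whole run.

```python
import asyncio
import random
from dataclasses import dataclass, field


@dataclass
class BayResult:
    bay: int
    test_name: str
    scores: list[float]  # one placeholder score per execution cycle (NOT real POLQA)
    errors: list[str] = field(default_factory=list)


class SimulatedVoiceTestServer:
    """HYPOTHETICAL stand-in for one shared, multi-tasking test system.

    Method names are invented for illustration; they are not the MultiDSLA API.
    """

    def __init__(self, bay_count: int) -> None:
        self._busy = {bay: False for bay in range(1, bay_count + 1)}
        self._session = None

    def log_on(self, user: str) -> None:
        # Step 1: log on from the host scripting platform (simulated).
        self._session = user

    def is_available(self, bay: int) -> bool:
        # Step 2: determine availability/status for a robot bay.
        return self._session is not None and not self._busy[bay]

    async def run_test(self, bay: int, test_name: str, cycles: int) -> BayResult:
        # Steps 3-5: select the bay, pick a test, execute N cycles.
        if not self.is_available(bay):
            raise RuntimeError(f"bay {bay} is not available")
        self._busy[bay] = True
        rng = random.Random(bay)  # deterministic per bay, for the demo only
        try:
            scores = []
            for _ in range(cycles):
                await asyncio.sleep(0)  # yield so other bays can progress
                scores.append(round(rng.uniform(1.0, 4.5), 2))
            return BayResult(bay, test_name, scores)
        finally:
            self._busy[bay] = False


async def run_all_bays(server, plan: dict[int, str], cycles: int) -> list[BayResult]:
    """Start one test per bay concurrently and collect results (steps 6-8)."""
    tasks = [server.run_test(bay, test, cycles) for bay, test in plan.items()]
    outcomes = await asyncio.gather(*tasks, return_exceptions=True)
    collected = []
    for bay, outcome in zip(plan, outcomes):
        if isinstance(outcome, Exception):
            # Exception path: keep the bay in the report with the error text.
            collected.append(BayResult(bay, plan[bay], [], [str(outcome)]))
        else:
            collected.append(outcome)
    return collected


# Usage: 20 bays, the same hypothetical test on each, three cycles per bay.
server = SimulatedVoiceTestServer(bay_count=20)
server.log_on("automation")
plan = {bay: "voice-call-baseline" for bay in range(1, 21)}
results = asyncio.run(run_all_bays(server, plan, cycles=3))
for r in results[:2]:
    print(r.bay, r.test_name, r.scores)
```

The design choice here is a single event loop on the host rather than one process per bay, which loosely mirrors the "one busy, multi-tasking system" idea; a real integration would replace the simulated methods with whatever calls the actual API exposes.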

This was quickly achieved, and we moved on to the next stage, where the customer would get hands-on with the MultiDSLA trial solution.

Evaluation Process: MultiDSLA API

Not surprisingly, the automation engineers were quick to appreciate the functionality of the MultiDSLA API. It took them a little longer to familiarize themselves with the methodology of objective voice quality testing, the meaning of MOS (Mean Opinion Score), and so on. Opale was there to assist with practical advice and to help find the right balance between functionality and test process efficiency.

System Architecture

Once the functionality, system stability and usability had all been proven to the satisfaction of the engineers, it was time to take the discussion to management to review the parameters of the proposed system.

The focus now turned from ‘commands’ and ‘results’ to:

- Architecture

- Scalability

- Lifecycle considerations

- Maintenance and support model

- And of course, the cost

A Successful Outcome: MultiDSLA Architecture and API

Opale Systems was awarded the supply contract for the voice quality test system, beating a significant competitor. The success factors were:

- Opale’s commitment to ensuring the ‘technical fit’ of the solution

- The flexibility of the MultiDSLA architecture

- The capabilities of the API

- An outstanding price/performance ratio

The Operator set a tight delivery window; Opale delivered within the required period, and the system was duly rolled out across the robot bays. Thanks to the thorough evaluation and Opale’s attention to the detailed requirements, the roll-out was completed without a single issue.

The objective of adding voice quality analysis to the robot bays was achieved almost overnight.

Contact Opale Systems or your distributor for more information.